The traceparent header carries the trace context across the wire. Galileo joins all spans that share a trace ID into a single trace.
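To make the header concrete, here is a minimal, standard-library-only sketch of the W3C Trace Context `traceparent` format (version `00`): `version-traceid-parentid-flags`. The helper names (`new_traceparent`, `parse_traceparent`, `child_traceparent`) are hypothetical and only for illustration. In a real service the OpenTelemetry propagator reads and writes this header for you, so this is not how Galileo or OTel implement it internally.

```python
import re
import secrets

# W3C Trace Context, version 00: four lowercase-hex fields joined by "-".
_TRACEPARENT = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def new_traceparent() -> str:
    """Start a new trace: random 16-byte trace ID, random 8-byte span ID, sampled flag."""
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-01"


def parse_traceparent(header: str) -> tuple[str, str, str]:
    """Return (trace_id, parent_span_id, flags), or raise ValueError if malformed."""
    match = _TRACEPARENT.fullmatch(header.strip())
    if match is None:
        raise ValueError(f"malformed traceparent: {header!r}")
    trace_id, span_id, flags = match.groups()
    # The spec treats all-zero trace IDs and span IDs as invalid.
    if trace_id == "0" * 32 or span_id == "0" * 16:
        raise ValueError("all-zero IDs are invalid")
    return trace_id, span_id, flags


def child_traceparent(incoming: str) -> str:
    """Build the header for the next hop: same trace ID, fresh span ID, same flags."""
    trace_id, _parent_span_id, flags = parse_traceparent(incoming)
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"
```

The point to notice is that `child_traceparent` keeps the trace ID and changes only the span ID. Because Agent B's spans carry the same trace ID as Agent A's, they can be joined into one trace.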

If both services are Python and use the Galileo SDK directly, Distributed Tracing (Beta) is simpler. Use the OTel approach when your services use different agent frameworks (Microsoft Agent Framework, Google ADK, LangChain, etc.) or different languages.

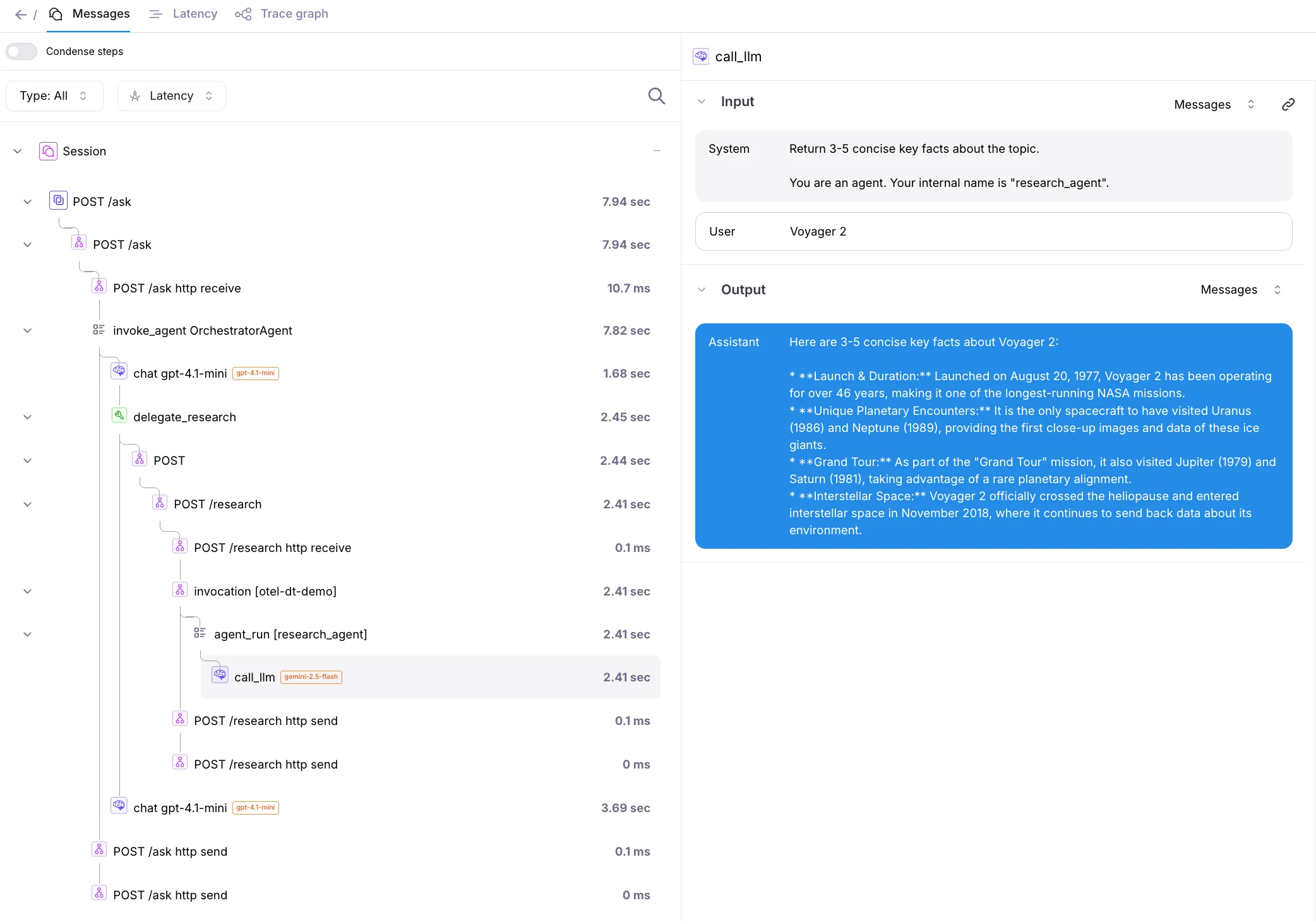

What you’ll see in Galileo

A single user request to Agent A that delegates to Agent B produces one trace:

Setup

.env

requirements.txt

GalileoSpanProcessor reads GALILEO_API_KEY, GALILEO_PROJECT, and GALILEO_LOG_STREAM from the environment and exports OTLP directly to Galileo’s OTel endpoint. No collector required.
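Since the processor takes its configuration from the environment, a small preflight check can catch a missing variable before either agent starts. The sketch below uses only the standard library. The helper names `missing_galileo_vars` and `require_galileo_vars` are hypothetical and not part of the Galileo SDK, and how the SDK itself reacts to a missing variable is not covered here.

```python
import os

# The three variables this page says GalileoSpanProcessor reads.
REQUIRED_VARS = ("GALILEO_API_KEY", "GALILEO_PROJECT", "GALILEO_LOG_STREAM")


def missing_galileo_vars(env=None):
    """Return the names of required variables that are unset or blank."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name, "").strip()]


def require_galileo_vars(env=None):
    """Raise RuntimeError listing every missing variable; do nothing if all are set."""
    missing = missing_galileo_vars(env)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

Calling `require_galileo_vars()` at the top of each agent's entry point, after loading `.env`, would fail fast with a readable message instead of producing a run with no exported spans.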

Agent A: Microsoft Agent Framework, calls Agent B

agent_a.py

Agent B: Google ADK research agent

agent_b.py

Run it

Open the otel-distributed-tracing project in Galileo, Log stream default. You’ll see one trace covering both agents:

Self-hosted Galileo

The OTel endpoint is different from Galileo’s regular API endpoint and is specifically designed to receive telemetry data in the OTLP format. If you are using:

- Galileo Cloud at app.galileo.ai, then you don’t need to provide a custom OTel endpoint. The default endpoint https://api.galileo.ai/otel/traces will be used automatically.

- A self-hosted Galileo deployment, replace the https://api.galileo.ai/otel/traces endpoint with your deployment URL. The format of this URL is based on your console URL, replacing console with api and appending /otel/traces.

  - If your console URL is https://console.galileo.example.com, the OTel endpoint would be https://api.galileo.example.com/otel/traces

  - If your console URL is https://console-galileo.apps.mycompany.com, the OTel endpoint would be https://api-galileo.apps.mycompany.com/otel/traces

Set GALILEO_API_ENDPOINT in .env; GalileoSpanProcessor picks it up automatically.
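The console-to-OTel URL rule for self-hosted deployments can be sketched as a small helper. This is an illustration of the naming rule only: `otel_endpoint_from_console` is a hypothetical function, not part of the Galileo SDK, and it assumes the console hostname begins with `console`, as in both examples.

```python
from urllib.parse import urlsplit, urlunsplit


def otel_endpoint_from_console(console_url: str) -> str:
    """Swap the leading 'console' in the hostname for 'api' and append /otel/traces."""
    parts = urlsplit(console_url)
    host = parts.netloc
    if not host.startswith("console"):
        raise ValueError(f"hostname does not start with 'console': {host!r}")
    # Keep whatever follows 'console' (".galileo..." or "-galileo...") unchanged.
    api_host = "api" + host[len("console"):]
    return urlunsplit((parts.scheme, api_host, "/otel/traces", "", ""))
```

For instance, `otel_endpoint_from_console("https://console-galileo.apps.mycompany.com")` returns `https://api-galileo.apps.mycompany.com/otel/traces`. If your deployment uses a hostname that does not follow this pattern, take the endpoint from your deployment's configuration instead.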

Related

- Distributed Tracing (Beta): Galileo’s native Python SDK distributed mode.

- Microsoft Agent Framework integration

- Google ADK (OpenTelemetry) integration

- Java + Python OTel distributed tracing example: End-to-end runnable example with a Java Spring Boot gateway and a Python FastAPI + LangGraph RAG service.