Overview

This guide shows you how to create a custom local metric in Python to use in an experiment.

In this example, you will create a metric that rates the brevity (shortness) of an LLM’s response based on word count. The sample code that runs the experiment uses OpenAI as the LLM.

In this guide you will:

- Set up a project with Galileo
- Create your local metric
- Prepare the experiment
- Run the experiment

Before you start

To complete this how-to, you will need:

Install dependencies

To use Galileo, you need to install some package dependencies, and configure environment variables.

Install Required Dependencies

Install the required dependencies for your app. Create a virtual environment using your preferred method, then install dependencies inside that environment:

pip install "galileo[openai]" python-dotenv

Create a .env file, and add the following values:

# Your Galileo API key
GALILEO_API_KEY="your-galileo-api-key"

# Your Galileo project name
GALILEO_PROJECT="your-galileo-project-name"

# The name of the Log stream you want to use for logging
GALILEO_LOG_STREAM="your-galileo-log-stream"

# Provide the console URL below if you are using a
# custom deployment, and not using the free tier, or app.galileo.ai.
# This will look something like "console.galileo.yourcompany.com".
# GALILEO_CONSOLE_URL="your-galileo-console-url"

# OpenAI properties
OPENAI_API_KEY="your-openai-api-key"

# Optional. The base URL of your OpenAI deployment.
# Leave this commented out if you are using the default OpenAI API.
# OPENAI_BASE_URL="your-openai-base-url-here"

# Optional. Your OpenAI organization.
# OPENAI_ORGANIZATION="your-openai-organization-here"

This assumes you are using a free Galileo account. If you are using a custom deployment, you will also need to add the URL of your Galileo Console:

GALILEO_CONSOLE_URL=your-Galileo-console-URL
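Before running anything, it can help to confirm that the variables the sample code reads are actually present. The following is a minimal sketch, not part of the Galileo SDK: `missing_env_vars` and `REQUIRED_VARS` are hypothetical names, and the check looks at the process environment, so it assumes your .env file has already been loaded (for example with `load_dotenv()` from python-dotenv).

```python
import os

# Variable names come from the .env file above; the helper is hypothetical.
REQUIRED_VARS = ("GALILEO_API_KEY", "GALILEO_PROJECT", "OPENAI_API_KEY")


def missing_env_vars(env=None, required=REQUIRED_VARS):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    missing = missing_env_vars()
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```

Passing a plain dictionary as `env` makes the check easy to try without touching your real environment.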

Create your local metric

Create a file for your experiment called experiment.py.

Create a scorer function

The scorer function assigns one of three ranks ("Terse", "Temperate", or "Talkative") depending on how many words the model outputs. Add this code to your experiment.py file.

from galileo import Trace, Span

def brevity_rank(step: Span | Trace) -> str:
    """
    Rank response brevity based on word count.
    """
    # split() with no argument splits on any run of whitespace,
    # so repeated spaces and newlines do not inflate the count
    word_count = len(step.output.content.split())
    if word_count <= 3:
        return "Terse"
    if word_count <= 5:
        return "Temperate"
    return "Talkative"
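To see how the thresholds behave before involving Galileo or an LLM, you can exercise the ranking rule against a stand-in object. This is only a sketch: `fake_step` is a hypothetical helper that mimics the `step.output.content` attribute path used by the scorer, and the function is repeated here (splitting on any whitespace) so the snippet runs on its own without the galileo package.

```python
from types import SimpleNamespace


def brevity_rank(step) -> str:
    """Same thresholds as the scorer above, repeated so this sketch runs alone."""
    word_count = len(step.output.content.split())
    if word_count <= 3:
        return "Terse"
    if word_count <= 5:
        return "Temperate"
    return "Talkative"


def fake_step(text: str) -> SimpleNamespace:
    """Hypothetical stand-in exposing only step.output.content."""
    return SimpleNamespace(output=SimpleNamespace(content=text))


samples = [
    "Asia",
    "Indonesia is in Asia.",
    "Greenland is geographically part of North America.",
]
for text in samples:
    print(f"{brevity_rank(fake_step(text))}: {text}")
```

The three samples contain one, four, and seven words, so they land in the "Terse", "Temperate", and "Talkative" ranks respectively.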

Create the local metric configuration

Here, we tell Galileo that our custom metric returns a str. We give it a name (“Terseness”), then assign the Scorer. Add this code to your experiment.py file.

from galileo.schema.metrics import LocalMetricConfig

terseness = LocalMetricConfig[str](
    # Metric name (shown as a column in Galileo)
    name="Terseness",
    # Scorer Function defined above
    scorer_fn=brevity_rank
)

Prepare the experiment

For this example, we’ll ask the LLM to specify the continent of four countries, encouraging it to be succinct.

Create a dataset

Create a dataset of inputs to the experiment by adding this code to your experiment.py file.

# Simple dataset with four countries:
countries_dataset = [
    {"input": "Indonesia"},
    {"input": "New Zealand"},
    {"input": "Greenland"},
    {"input": "China"},
]

Call the LLM

Next you need a custom function to be called by your experiment. Add this code to your experiment.py file.

import os

from galileo.openai import openai

# The function that calls the LLM for each input:
def llm_call(input):
    # Read the OpenAI API key from the environment
    client = openai.OpenAI(api_key=os.environ["OPENAI_API_KEY"])
    # Return only the text of the first choice
    return (
        client.chat.completions.create(
            model="gpt-4o",
            messages=[
                {
                    "role": "system",
                    "content": """
                    You are a geography expert.
                    Always answer as succinctly as possible.
                    """
                },
                {
                    "role": "user",
                    "content": f"""
                    Which continent does the following
                    country belong to: {input}
                    """
                },
            ],
        )
        .choices[0]
        .message.content
    )
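If you want to inspect the prompt without calling OpenAI, one option is to build the message list in a separate function. The sketch below is an assumption about how you might structure this, not part of the original sample: `build_messages` is a hypothetical helper, and it uses `textwrap.dedent` so the indentation of the triple-quoted string is not sent to the model.

```python
from textwrap import dedent


def build_messages(country: str) -> list[dict[str, str]]:
    """Hypothetical helper: build the chat messages sent for one country."""
    system = dedent(
        """
        You are a geography expert.
        Always answer as succinctly as possible.
        """
    ).strip()
    user = f"Which continent does the following country belong to: {country}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_messages("Indonesia")
print(messages[1]["content"])
```

The returned list has the same two roles as the `messages` argument in the function above, so it could be passed to `client.chat.completions.create` in its place.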

Add code to run the experiment

Finally, add code to run the experiment using your dataset and custom local metric.

import os

from galileo.experiments import run_experiment

# Run the Experiment!
results = run_experiment(
    "terseness-local-metric",
    dataset=countries_dataset,
    function=llm_call,
    metrics=[terseness],
    project=os.environ["GALILEO_PROJECT"],
)

Run the experiment

Now that your experiment is set up, you can run it to see the results of your local metric.

Run the experiment code

When the experiment runs, it will output a link to view the results in the terminal.

(.venv) ➜ python experiment.py

Experiment terseness-local-metric has completed and results are available at https://console.galileo.ai//project/xxx/experiments/xxx

View the experiment

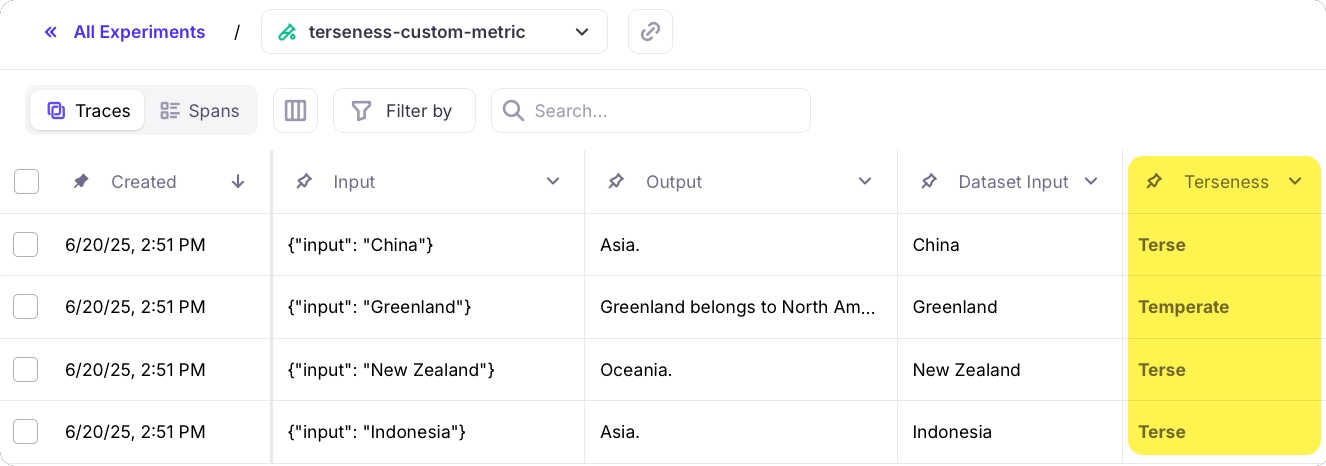

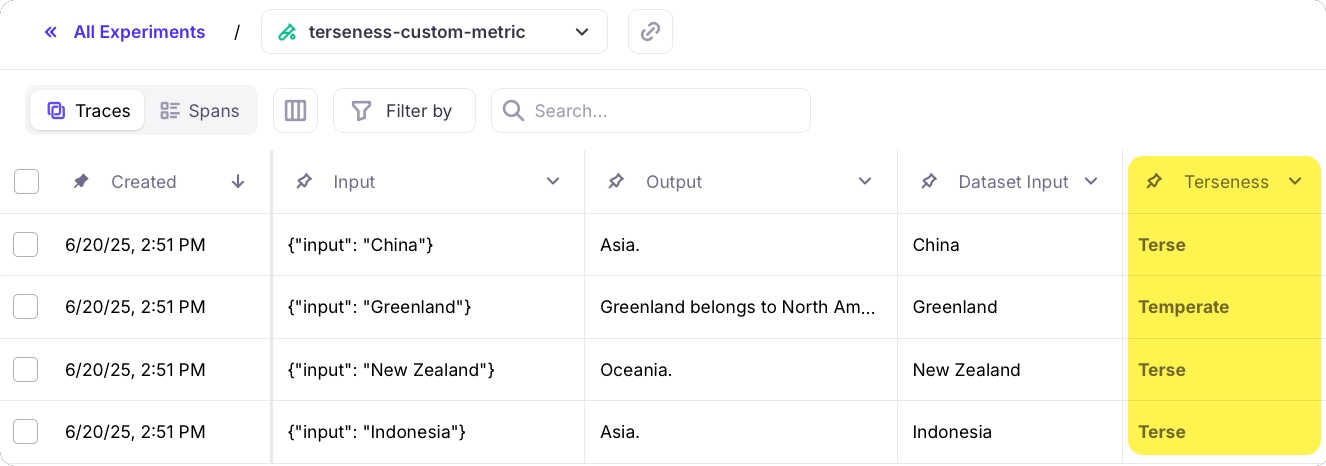

Follow the link in your terminal to view the results of the experiment. This experiment has 4 rows, one per item in the dataset. The new Terseness metric is available both in the Traces table and in the metrics pane when you select a row.
